Understanding the Challenges of Data Migration

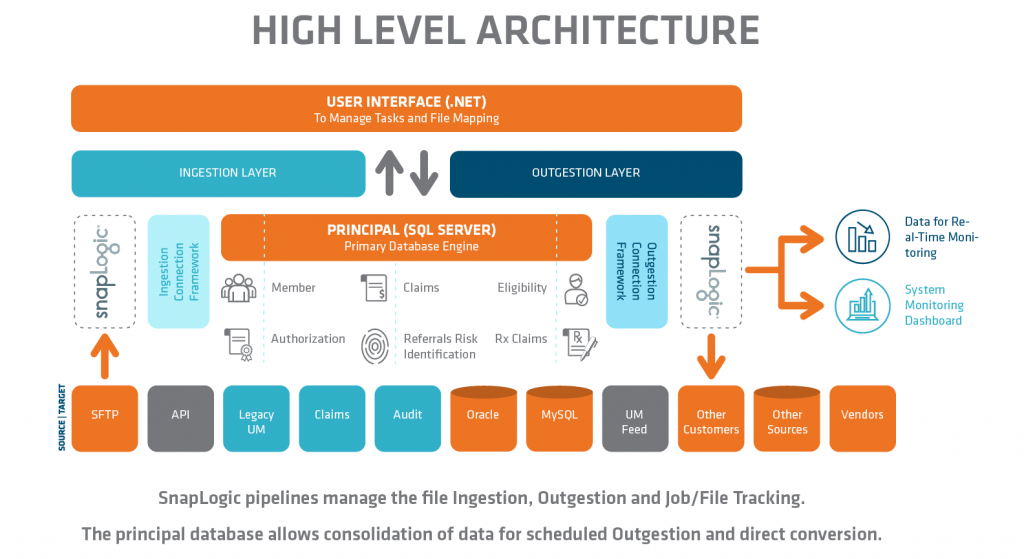

emids delivered a GUI-based ETL middleware tool that leveraged Microsoft SQL Server, Snaplogic and a .NET interface.

Overview

One of the main reasons many organizations, especially at the enterprise level, continue to use older or legacy systems is the challenge of updating all of the “jobs,” or interfaces, that move data into and out of those systems. After years of work and workarounds, these interfaces become complex and patched with custom code. Much of the cost, time and risk in any digital transformation lies in this area, making data migration one of its greatest challenges. The underlying process is called Extract, Transform and Load, or ETL.

At the same time, many IT departments treat interface work as basic blocking and tackling and often give it less prominence than other parts of a system modernization. Compounding this, data trading partners are often reluctant to change their incoming feeds, or will do so only at a cost, for many of the same reasons. The basic work of ETL can therefore become a drag on implementations because of the effort involved in documenting the feeds and accommodating clients who are also slow to change.

Business rules, the Transformation component of ETL, can end up scattered across multiple IT systems, either lost in layers or trapped in legacy systems. Because these rules are built over time, there is a tendency either to keep extending a single long and complex “rule” or to create a new “purpose-driven” task each time. Either path adds complexity and creates a process dilemma. The skills and knowledge for transformation work are often split between technical IT staff and business analysts. This frequently leads to purpose-driven interfaces: either the analyst attempts to replicate IT functions and use the technical tools directly, or the IT resource creates rules on the analyst’s behalf, over and over, through individual requests.

Our client, an enterprise healthcare payer/provider, was modernizing its IT infrastructure and clinical delivery systems. Its utilization management (UM) platform was being replaced, and the interfaces had to be migrated. A new interface management and ETL tool, Snaplogic, was also being implemented across the enterprise. As part of these efforts, the client recognized an opportunity to avoid repeating the past, and a need for a tool to migrate the interfaces to the new platform without recreating the process dilemma of purpose-driven interfaces. The tool’s purpose was to give analysts a way to onboard customers and set up file transfers, or interfaces, without purpose-driven coding.

Solution

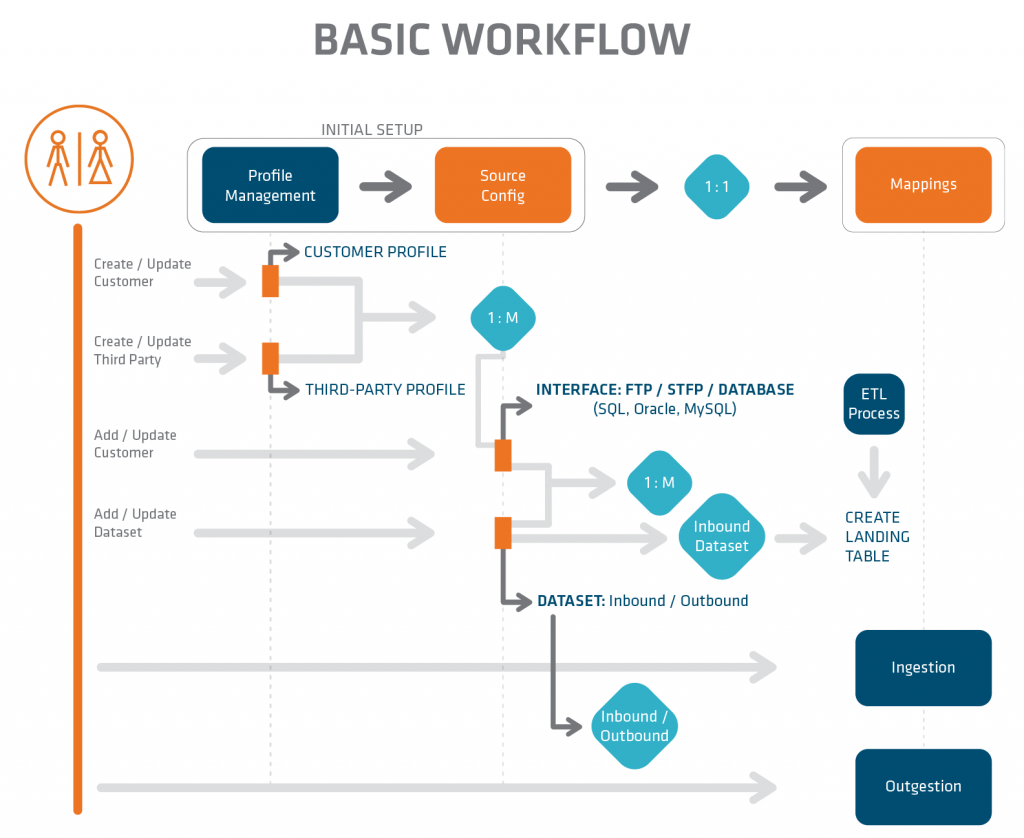

We were engaged to build a GUI-based ETL middleware tool that leveraged Microsoft SQL Server, Snaplogic and a .NET interface. Middleware, in this context, is software that sits between the data source and the target system and enables communication that does not depend on the two systems sharing a common structure. The middleware acts as a translator between the two systems, and transformations are typically applied as the data moves from one system to the other. Middleware can also be made available to analysts so they can apply their contextual knowledge without the overhead of ETL and IT administration.
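
To make the translator role concrete, the minimal Python sketch below assumes each source feed is described by a field mapping onto the target layout, so the source and the target never need a common structure. The feed names, field names and the `translate` function are hypothetical; in a configuration-driven tool such mappings would more likely live in configuration tables than in code.

```python
from typing import Any, Dict

# Hypothetical per-source mappings: target field -> source field.
# Each partner keeps its own layout; only this mapping connects it to the target.
FIELD_MAPS: Dict[str, Dict[str, str]] = {
    "partner_a": {"member_id": "MBR_NO", "last_name": "LNAME", "dob": "BIRTH_DT"},
    "partner_b": {"member_id": "memberNumber", "last_name": "surname", "dob": "dateOfBirth"},
}


def translate(source: str, record: Dict[str, Any]) -> Dict[str, Any]:
    """Translate one source record into the target system's layout.

    Fields missing from the source record come through as None.
    """
    mapping = FIELD_MAPS[source]
    return {target: record.get(src) for target, src in mapping.items()}


print(translate("partner_a", {"MBR_NO": "A123", "LNAME": "Smith", "BIRTH_DT": "1980-02-01"}))
print(translate("partner_b", {"memberNumber": "B456", "surname": "Lee", "dateOfBirth": "1975-07-30"}))
```

Both calls produce records with the same `member_id`, `last_name` and `dob` keys, even though the two partners name those fields differently.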

We started by examining the capabilities of Snaplogic and the existing processes to discover the business context and the actual rules. We considered applying a master set of rules globally, but instead opted to design a framework in which entering configuration details into the application causes complex and custom rules to be applied to any incoming feed in a generic fashion. We also designed the capability to use MDM-governed or core tables to consolidate multiple independent incoming feeds into a single stream to the target UM system. The configuration also includes steps to validate incoming records and divert potential errors to a path for correction.
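
The configuration-driven design can be sketched as a small library of generic rule primitives plus per-feed configuration that names which rule applies to which field. The Python sketch below is an illustration under assumptions, not the delivered framework (which used SQL Server, Snaplogic and a .NET front end): `FeedConfig`, `run_feed`, the rule names and the `PLAN_XWALK` crosswalk standing in for an MDM-governed core table are all hypothetical. Records that fail validation are diverted to a correction list, and valid records from different feeds merge into one stream.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

Record = Dict[str, Any]
Params = Dict[str, Any]

# Generic, reusable rule primitives (hypothetical). A feed's configuration only
# names them and supplies parameters, so no feed-specific code is written.
TRANSFORMS: Dict[str, Callable[[Any, Params], Any]] = {
    "upper": lambda v, p: v.upper() if isinstance(v, str) else v,
    "strip": lambda v, p: v.strip() if isinstance(v, str) else v,
    "default": lambda v, p: p["value"] if v in (None, "") else v,
    "lookup": lambda v, p: p["table"].get(v, v),  # map via a core/crosswalk table
}

VALIDATORS: Dict[str, Callable[[Any, Params], bool]] = {
    "required": lambda v, p: v not in (None, ""),
    "max_len": lambda v, p: v is None or len(str(v)) <= p["n"],
}


@dataclass
class FeedConfig:
    """One feed's configuration rows: (field, rule name, parameters)."""
    name: str
    transforms: List[Tuple[str, str, Params]] = field(default_factory=list)
    validations: List[Tuple[str, str, Params]] = field(default_factory=list)


def run_feed(cfg: FeedConfig, records: List[Record]) -> Tuple[List[Record], List[Tuple[Record, List[str]]]]:
    """Apply the configured rules; return (valid records, records diverted for correction)."""
    valid: List[Record] = []
    diverted: List[Tuple[Record, List[str]]] = []
    for rec in records:
        out = dict(rec)
        for fld, rule, params in cfg.transforms:
            out[fld] = TRANSFORMS[rule](out.get(fld), params)
        failures = [f"{fld}:{rule}" for fld, rule, params in cfg.validations
                    if not VALIDATORS[rule](out.get(fld), params)]
        if failures:
            diverted.append((out, failures))  # error path: held for correction
        else:
            out["source_feed"] = cfg.name
            valid.append(out)
    return valid, diverted


# Usage: two partners send the same plan under different codes; a shared
# (hypothetical) core table maps both to one master code.
PLAN_XWALK = {"GOLD-01": "PLN100", "G1": "PLN100"}

feed_a = FeedConfig("partner_a",
                    transforms=[("plan", "lookup", {"table": PLAN_XWALK}), ("last_name", "upper", {})],
                    validations=[("member_id", "required", {})])
feed_b = FeedConfig("partner_b",
                    transforms=[("plan", "lookup", {"table": PLAN_XWALK}), ("last_name", "strip", {})],
                    validations=[("member_id", "required", {}), ("last_name", "max_len", {"n": 35})])

a_ok, a_err = run_feed(feed_a, [{"member_id": "A1", "plan": "GOLD-01", "last_name": "smith"}])
b_ok, b_err = run_feed(feed_b, [{"member_id": "", "plan": "G1", "last_name": " Lee "},
                                {"member_id": "B2", "plan": "G1", "last_name": " Lee "}])
stream = a_ok + b_ok  # single consolidated stream to the target system
```

In this sketch, onboarding a third partner means adding another `FeedConfig` rather than another purpose-built job, which is the point of the generic approach.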

Results

Using the application framework we developed, analysts can now set up new customer feeds, connect to sources, move and archive files, apply transformation rules, validate data, load to targets and manage job scheduling without manipulating the underlying infrastructure. The resulting tool significantly reduces both development resource cost and the time needed to implement new customers and, eventually, to change existing ones.

This no/low-code approach keeps the technical delivery inside the framework, which has reduced the IT support load and allows analysts to create and manage the process without assistance in all but the most difficult scenarios. Because the rules are consolidated within the framework, auditing and reporting on them is simpler and more readily understood by both technical and business users. This was accomplished by applying a generic approach to the process while still allowing individual customizations to be applied in a standardized format.

The engagement entailed extensive development, testing and component integration between the application, database and Snaplogic. This tool was not only delivered on time and on budget, but also met all functional requirements and attained all goals and objectives initially established by the client.

Scaling ServiceNow

Overview

Our client is a Fortune 500 payer and a leading provider of traditional and consumer-directed healthcare insurance plans and related services. These include medical, pharmaceutical, dental, behavioral health, long-term care and disability plans, provided primarily through employer-paid (fully or partly) insurance and benefit programs and through Medicare. The organization’s network includes 22.1 million medical members, 12.7 million dental members, 13.1 million pharmacy benefit management services members, 1.2 million healthcare professionals, more than 690,000 primary care doctors and specialists, and more than 5,700 hospitals.

At emids, our team of ServiceNow experts helps organizations implement IT Service Management solutions on ServiceNow, a tool designed to boost IT productivity, performance and overall impact by automating mundane tasks such as routing requests to the relevant assignment groups and categorizing them.
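
Automatic routing and categorization can be pictured as a function from an incident's free-text description to a (category, assignment group) pair. The minimal Python sketch below uses simple keyword overlap as a stand-in; it is not ServiceNow's implementation (whose own features can use trained machine-learning models), and the rules, group names and `route` function are hypothetical.

```python
import re
from typing import List, Tuple

# Hypothetical routing rules: (category, assignment group, keywords).
# Keyword overlap stands in for the trained classifier a platform would use.
RULES: List[Tuple[str, str, List[str]]] = [
    ("network", "Network Operations", ["vpn", "wifi", "network", "dns"]),
    ("access", "Identity and Access", ["password", "locked", "login", "mfa"]),
    ("hardware", "Desktop Support", ["laptop", "monitor", "printer", "keyboard"]),
]
FALLBACK: Tuple[str, str] = ("inquiry", "Service Desk")


def route(description: str) -> Tuple[str, str]:
    """Return (category, assignment group) for a free-text incident description."""
    words = set(re.findall(r"[a-z]+", description.lower()))
    best, best_hits = FALLBACK, 0
    for category, group, keywords in RULES:
        hits = len(words.intersection(keywords))
        if hits > best_hits:  # ties keep the earlier rule
            best, best_hits = (category, group), hits
    return best


print(route("Cannot connect to VPN from home wifi"))
```

Descriptions that match no rule fall back to a general service desk queue, mirroring the manual triage step that automation is meant to reduce.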

The client had been using our ServiceNow tool to manage IT services for various subsidiaries for a number of years. However, after the client was acquired by another large organization, it embarked on a series of integration projects that challenged the tool’s ability to scale quickly and effectively.

Three primary issues concerning the usability of the ServiceNow tool needed to be addressed:

- Limited speed and scale for innovation due to disparate ServiceNow instances. Using different ServiceNow instances meant relying on multiple point tools, which made it difficult to support new innovation. Manually routing and categorizing incidents was also time-consuming.

- Lack of visibility, creating risk and keeping IT stuck in maintenance mode. The organization stored data in multiple systems, making it difficult to get a single, actionable view of its IT performance.

- Increased cost of ownership for old applications running on outdated hardware. Significant time and resources were being spent maintaining existing IT systems without delivering new benefits.

Solutions

We developed three solutions that significantly improved the usability of the client’s ServiceNow tool.

First, we created a broad IT app portfolio on an extensible, scalable platform that future-proofs the organization’s investment. The solution lets the client extend its IT management capabilities without investing in costly and complex integrations and hardware. This was achieved by bringing data and processes into a single ServiceNow instance.

Next, we established a single system of action with machine learning, replacing fragmented data with automated work. The organization’s IT services can now be managed from that single system of action, with simplified reporting and machine learning driving higher levels of automation.

Finally, we implemented security and domain separation to deliver personalized services to each subsidiary. A domain-separated infrastructure made it possible to deliver services quickly, with proven practices built in, from a secure cloud with 99.995% uptime, while ensuring that employee records, service catalogs and workflows were customized to each subsidiary’s needs.
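
Domain separation can be modeled as a tree of domains in which each record carries a domain tag and a user's visibility depends on where their domain sits in the tree. The Python sketch below assumes one simple rule: a user sees records in their own domain and in any domain beneath it, so the parent company sees every subsidiary's records while each subsidiary sees only its own. It illustrates the concept only; it is not how ServiceNow configures domains, and the domain names and the `lineage` and `visible_to` functions are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical domain tree (child -> parent); "global" is the root.
PARENT: Dict[str, Optional[str]] = {
    "global": None,
    "parent_co": "global",
    "subsidiary_a": "parent_co",
    "subsidiary_b": "parent_co",
}


@dataclass
class Record:
    number: str
    domain: str


def lineage(domain: str) -> List[str]:
    """The domain itself followed by each ancestor up to the root."""
    chain: List[str] = []
    node: Optional[str] = domain
    while node is not None:
        chain.append(node)
        node = PARENT[node]
    return chain


def visible_to(user_domain: str, records: List[Record]) -> List[Record]:
    """A user sees records in their own domain and in any domain beneath it."""
    return [r for r in records if user_domain in lineage(r.domain)]


records = [Record("INC001", "subsidiary_a"), Record("INC002", "subsidiary_b"), Record("INC003", "parent_co")]
print([r.number for r in visible_to("subsidiary_a", records)])
```

Because visibility is derived from the tree rather than coded per subsidiary, adding a subsidiary amounts to adding one entry to `PARENT`.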

Results

As a result of these solutions, the organization can now:

- Drive digital transformation and new efficiencies with automation. We helped the company drive automation and gain more visibility into IT performance. With more insight into service metrics, the company found it easier to implement new innovations and improve customer satisfaction.

- Deliver reliable IT services quickly from any device, anywhere, at any time. The client can now find and resolve IT issues more quickly than ever before.

- Manage continual improvement using built-in analytics and shift the budget to innovation. By consolidating onto a single ServiceNow instance, the organization realized infrastructure cost savings of up to $500k, simply because it now pays for one instance.

How a Large National Payer Reaped the Benefits of Strategic DevOps

Agile and DevOps define today’s modern software and IT organizations. Their goals are to increase efficiency, accelerate time-to-market for new applications and services, and be more responsive to customer needs. In 2017, Forrester Research reported that 50 percent of enterprises were implementing DevOps in some fashion. However, progress has been slower than it could be, because these methodologies require fundamental changes in how team members work. New tools, new skill sets, new processes and new mindsets are all required.

For QA teams, specific hurdles include changing workflows to move testing earlier in the cycle, mastering technical tools needed for automation and working at a faster pace that requires more frequent collaboration. Oftentimes, organizations don’t have months to figure out how to transition, so they jump in with mixed results.

Our customer, a large national payer, decided to be proactive and establish best practices upfront. Like many large companies, they are in the midst of a massive digital transformation project. In 2017, the company launched an initiative to improve member engagement, coordination of care and quality of care across 20 million members. Digital tools such as interactive mobile apps and analytics are core to their strategy. The data coming from those programs will help them understand and manage populations for better outcomes. Given the fast pace of change in consumer technology, they needed to embrace Agile and DevOps models.

Project leaders knew that effective collaboration between all stakeholders including business analysts, QA and developers would be necessary. They wanted to implement two-week sprint cycles, incorporate big data technology and test across multiple platforms and devices. This was a lot to accomplish within those tight time frames.

As we began partnering with them on their DevOps transformation, we recommended a strategy that combined in-sprint automation with a shift-left orientation. This meant moving testing activities earlier in the sprint, aided by automation, so that QA could keep pace with the volume of test cases and catch problems immediately instead of during the next sprint. These practices align well with continuous development and continuous testing, hallmarks of DevOps. Because automated testing tools typically require coding skills that most manual testers don’t have, we also recommended a platform with scriptless automation, which lets testers write test cases in business language and ramp up quickly. We also integrated the automated testing platform with Rally to automate results and defect reporting.
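
One common way to build scriptless automation is a keyword-driven pattern: engineers bind business-language step phrases to automation code once, and testers then compose test cases from those phrases without writing code. The minimal Python sketch below illustrates that pattern under stated assumptions; the case study does not name the platform used, and `Portal`, `step` and `run_case` are hypothetical. Tools such as Cucumber apply a similar idea with Gherkin syntax. The Rally integration is not modeled.

```python
import re
from typing import Callable, List, Optional, Tuple


class StepFailed(Exception):
    """Raised when a step's expected outcome is not met."""


class Portal:
    """Toy system under test (hypothetical): a member portal login."""

    def __init__(self) -> None:
        self.users = {"jdoe": "s3cret"}
        self.user: Optional[str] = None
        self.message = ""

    def login(self, user: str, password: str) -> None:
        if self.users.get(user) == password:
            self.user, self.message = user, "Welcome"
        else:
            self.user, self.message = None, "Invalid credentials"


# Keyword library, written once by automation engineers: each business-language
# step pattern is bound to an action against the system under test.
STEPS: List[Tuple[str, Callable[..., None]]] = []


def step(pattern: str) -> Callable[[Callable[..., None]], Callable[..., None]]:
    def register(fn: Callable[..., None]) -> Callable[..., None]:
        STEPS.append((pattern, fn))
        return fn
    return register


@step(r'the member logs in as "(.+)" with password "(.+)"')
def _log_in(portal: Portal, user: str, password: str) -> None:
    portal.login(user, password)


@step(r'the page shows "(.+)"')
def _page_shows(portal: Portal, text: str) -> None:
    if portal.message != text:
        raise StepFailed(f"expected {text!r}, got {portal.message!r}")


def run_case(steps: List[str]) -> bool:
    """Run one tester-written case; True if every step passes."""
    portal = Portal()
    for line in steps:
        for pattern, action in STEPS:
            match = re.fullmatch(pattern, line.strip())
            if match:
                try:
                    action(portal, *match.groups())
                except StepFailed:
                    return False
                break
        else:
            raise ValueError(f"no keyword matches step: {line!r}")
    return True


# Test cases as a tester would write them: plain steps, no code.
valid_login = ['the member logs in as "jdoe" with password "s3cret"', 'the page shows "Welcome"']
wrong_password = ['the member logs in as "jdoe" with password "oops"', 'the page shows "Welcome"']
print(run_case(valid_login), run_case(wrong_password))
```

Unrecognized phrasing raises an error instead of silently passing, which keeps the shared keyword library the single point where automation code lives.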

We were thrilled to learn that our customer realized significant benefits from this approach. Highlights included a 15 percent time savings in test planning, a 60 percent time savings in regression testing, faster time-to-market and $500,000 in annual cost savings from a framework that eliminated the need for every quality engineer to have deep coding skills. With a majority of its teams adopting in-sprint automation, the company is well on its way to establishing a mature DevOps practice.

DevOps can be an exciting shift for everyone on the development team, including QA. People are able to implement new ideas faster and experiment with the latest technologies. Developers and testers can learn from each other to improve products on the fly, and end-users are happier. Ensuring that your team has the proper training, tools and senior-level support to make this shift will keep your best people on board and help the organization reap the benefits of DevOps and Agile faster.